The Difference Between Businesses That Win With AI and Businesses That Just Talk About It

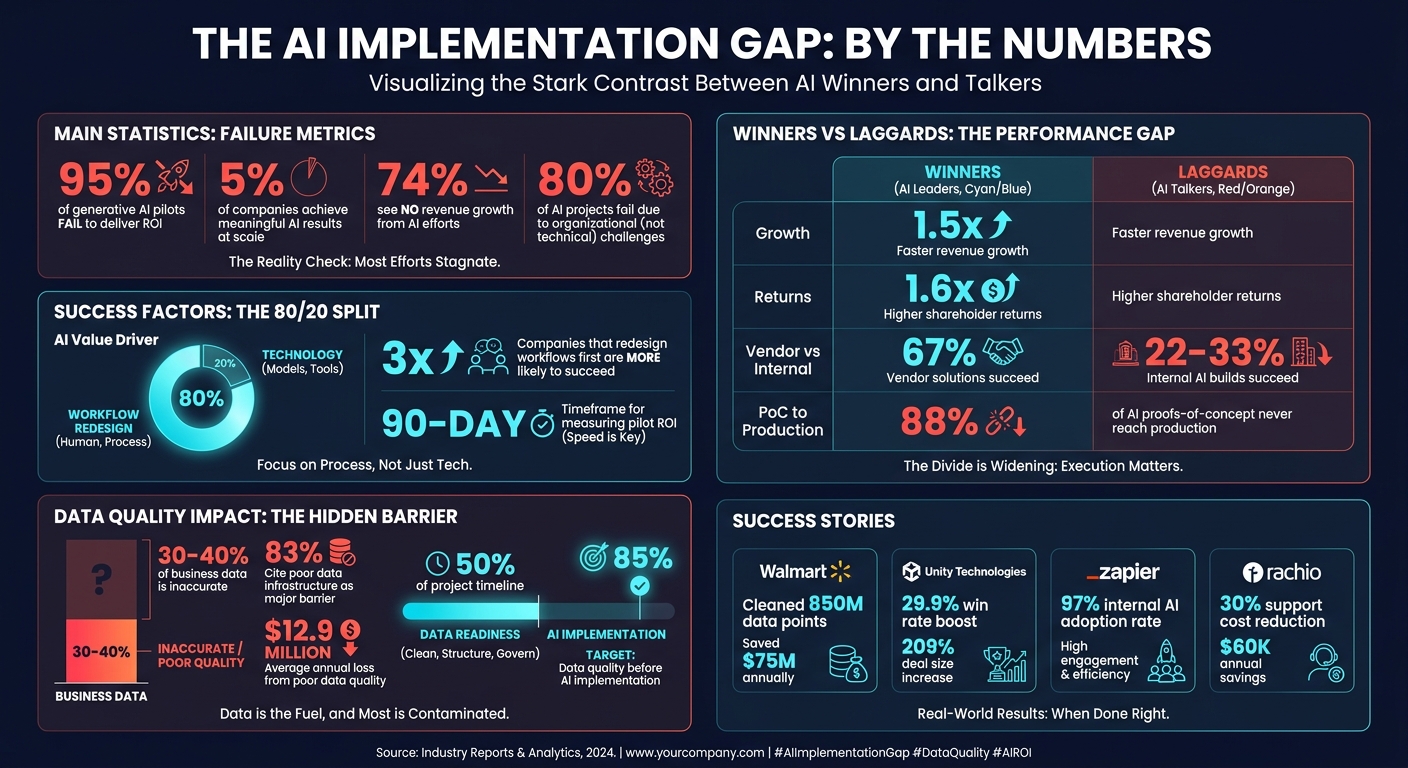

Most companies fail at AI because they start backward - focusing on flashy tools instead of clear problems. The result? 95% of generative AI pilots fail to deliver ROI, and only 5% of companies achieve meaningful results. Successful businesses take a different approach:

- Start with a problem. Identify bottlenecks costing time or money before choosing tools.

- Focus on financial impact. Measure success in dollars saved or revenue generated, not adoption rates.

- Fix your data first. Poor data quality derails AI projects - spend time ensuring clean, accurate, and integrated data.

- Move fast and test. Launch focused pilots with measurable goals, and don’t hesitate to pivot if results aren’t clear within 90 days.

Companies like Walmart and Zapier are pulling ahead by tying AI to measurable outcomes like cost savings and revenue growth. The gap between AI leaders and laggards is growing. The question is: Will you act before it’s too late?

AI Implementation Success Rates: Winners vs Talkers Statistics



Why Most Businesses Fail at AI Implementation

Many businesses falter with AI because they approach it backward - starting with the technology and then scrambling to find a problem to solve. Surprisingly, 80% of AI projects fail due to organizational challenges, not technical hurdles. The core issue? Diving in without a clear business problem.

Here's the kicker: technology accounts for only 20% of an AI initiative's value. The other 80% depends on rethinking and redesigning workflows. Without this redesign, companies risk automating flawed processes, effectively speeding up inefficiencies. The numbers paint a stark picture: just 5% of companies achieve meaningful results from AI at scale, while 74% see no revenue growth from their efforts. It's not about having better models or bigger budgets - it’s about how companies frame their approach from the start. Missteps here pave the way for further challenges, as outlined below.

AI Projects Without Business Objectives

A common mistake is launching AI projects simply to keep up with competitors, without addressing any specific business bottlenecks. This often leads to "superficial automation" - projects that look impressive but fail to make a dent in the bottom line.

Here’s how it typically unfolds: someone attends a tech conference, gets dazzled by a flashy AI demo, and returns convinced the company needs to "do AI." Budgets are allocated, pilots are launched, but no one can answer a fundamental question: "What financial outcome are we aiming for?" This lack of clarity is why 95% of generative AI pilots fail to deliver measurable ROI - they lack a clear "ROI thesis" that defines whether the project will cut costs, boost revenue, reduce risks, or improve efficiency.

Companies that succeed take a different route. They define objectives from the outset. Take Zapier, for example. In January 2026, CEO Wade Foster spearheaded a "Code Red" initiative to integrate AI into core workflows. The result? A 97% internal AI adoption rate. One standout success was their Support team’s "Sidekick", an AI tool that halved ticket handling times - directly tying AI to measurable operational improvements. The takeaway is simple: if you can’t quantify the expected results in dollars, you won’t know if the project is working.

No Clear Problem Definition

Another major pitfall is failing to define the problem AI is supposed to solve. Without a clear problem statement, teams often end up automating tasks that didn’t need fixing in the first place. In contrast, high-performing organizations are three times more likely to redesign workflows before selecting an AI model. They start by mapping out current processes, pinpointing breakdowns, and calculating the cost of those failures.

On the flip side, companies that choose tools first and hunt for use cases later see much lower success rates. Build-versus-buy numbers point the same way: internal AI builds succeed only 33% of the time, compared with 67% when businesses buy specialized tools aimed at well-defined problems. If workflows are undocumented or inefficient to begin with, layering AI on top just automates waste. Without a clearly defined business problem, AI projects are almost guaranteed to fall short.

Poor Data Quality and Disconnected Systems

Even with clear objectives and problem definitions, data issues can derail AI efforts. AI models are only as good as the data they’re trained on, and when that data is incomplete, duplicated, or siloed across systems, the results can be disastrous. In fact, 30–40% of business data is inaccurate, leading AI models to inherit and amplify these errors. For instance, if your CRM or ERP systems are riddled with inconsistent records, the AI might confidently make flawed decisions.

Integration is another hurdle. The average enterprise uses 14 different software systems per workflow, yet only a fraction of these systems have clean, reliable APIs. This can lead to silent failures, where AI performs well in testing but falters in production due to bad data from legacy systems. Unsurprisingly, 83% of organizations cite poor data infrastructure as a major barrier to AI success.

The financial impact of poor data quality is staggering - companies lose an average of $12.9 million annually because of it. High-performing organizations understand this and dedicate significant effort to data readiness. In fact, they often spend 50% of the project timeline preparing data before even touching an AI model.

John Radosta, Principal AI Engineer at Synvestable, emphasizes this point: "If your team hasn't spent at least 50% of the project timeline on data readiness before touching a model, the project is already at risk."

Walmart offers a great example of getting data right. The company used generative AI to clean up over 850 million product data points - a task that would’ve required 100 times the manual workforce. This effort eliminated 30 million unnecessary delivery miles and saved $75 million in just one year. Achieving such results is only possible with clean, well-connected data.

Before starting any AI project, run a 4–8 week data audit to confirm that your data is complete, accurate, and standardized across systems. Aim for a data-quality score of at least 85% on those criteria before implementing AI.
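To make the 85% target concrete, here is a minimal Python sketch of one way to score a batch of records. It assumes just two checks, completeness of a few required fields and uniqueness by normalized email, and takes the weaker of the two as the overall score. The field names and the scoring rule are hypothetical, so swap in whatever "accurate" and "standardized" mean for your own systems.

```python
# Minimal data-audit sketch. REQUIRED_FIELDS and the min() scoring rule are
# hypothetical choices, not a standard definition of "data quality".
REQUIRED_FIELDS = ("customer_id", "email", "country")
QUALITY_TARGET = 0.85  # the 85% bar from the text


def audit(records: list[dict]) -> dict:
    """Score records on completeness and uniqueness, each from 0.0 to 1.0."""
    if not records:
        return {"completeness": 0.0, "uniqueness": 0.0, "score": 0.0, "ready": False}

    # Completeness: share of records with every required field filled in.
    complete = sum(
        1 for r in records
        if all(str(r.get(f) or "").strip() for f in REQUIRED_FIELDS)
    )
    completeness = complete / len(records)

    # Uniqueness: share of distinct emails after trimming and lowercasing.
    emails = [str(r.get("email") or "").strip().lower() for r in records]
    filled = [e for e in emails if e]
    uniqueness = len(set(filled)) / len(filled) if filled else 1.0

    # Use the weaker dimension so one good score can't hide a bad one.
    score = min(completeness, uniqueness)
    return {
        "completeness": completeness,
        "uniqueness": uniqueness,
        "score": score,
        "ready": score >= QUALITY_TARGET,
    }


records = [
    {"customer_id": "1", "email": "a@x.com", "country": "US"},
    {"customer_id": "2", "email": "A@x.com ", "country": "US"},  # duplicate email
    {"customer_id": "3", "email": "b@x.com", "country": ""},     # missing country
    {"customer_id": "4", "email": "c@x.com", "country": "DE"},
]
print(audit(records))
```

In this toy batch, both checks land at 75%, so the data would not clear the bar yet.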

How to Move From AI Talk to AI Execution

Turning AI discussions into tangible results requires more than just ideas - it demands execution. Many companies struggle to bridge the gap between AI pilots and meaningful outcomes because they fail to connect AI projects to measurable goals or real customer needs. Here's a framework to help ensure your AI initiatives deliver results instead of stalling.

Step 1: Connect AI Projects to Business Metrics

To move beyond theoretical discussions, tie AI efforts directly to business metrics. Start by asking: "What profit or loss metric does this affect?" If you can't identify a financial impact, the project might lack focus. Begin with a review of your current metrics, categorizing them as either technical (e.g., model accuracy or latency) or business-related (e.g., revenue per workflow or cost per transaction). Then, link each metric to a specific business objective.

Successful companies measure financial impact from the start. For example, Unity Technologies implemented Clari's AI revenue platform in 2026 to improve sales forecasting. By analyzing past deal patterns and buyer behavior, the AI flagged at-risk deals early, leading to a 29.9% boost in win rates, a 30.2% drop in slipped deals, and a 209% increase in average deal size.

Set clear benchmarks and a fallback plan: if the return on investment (ROI) isn't evident within 90 days, shift resources elsewhere. Companies that adapt their KPIs for AI are three times more likely to see financial gains compared to those that don't. Also, assign a dedicated product owner to oversee each AI initiative. Committees don't deliver results - product managers do.
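The 90-day rule is easy to write down in advance so nobody has to argue about it at the review meeting. This Python sketch assumes you log benefit and cost in dollars each month. It treats ROI as "evident" if the pilot has already paid for itself or if its most recent month was net positive; that second condition is a hypothetical stand-in for "a clear path to ROI", so tune it to your own definition.

```python
REVIEW_DAY = 90  # the 90-day checkpoint from the text


def pilot_decision(day: int, monthly_benefit: list[float], monthly_cost: list[float]) -> str:
    """Return "keep measuring", "continue", or "kill" for an AI pilot.

    Hypothetical rule: continue if cumulative benefit covers cumulative cost,
    or if the latest month's net gain is positive (trending toward payback).
    """
    if day < REVIEW_DAY:
        return "keep measuring"
    if len(monthly_benefit) != len(monthly_cost):
        raise ValueError("need one benefit and one cost figure per month")
    if not monthly_benefit:
        return "kill"  # no dollar measurements means no evidence of ROI

    cumulative_net = sum(monthly_benefit) - sum(monthly_cost)
    latest_net = monthly_benefit[-1] - monthly_cost[-1]
    return "continue" if cumulative_net >= 0 or latest_net > 0 else "kill"


print(pilot_decision(90, [2_000, 4_000, 7_000], [5_000, 5_000, 5_000]))  # continue
print(pilot_decision(90, [1_000, 1_200, 1_100], [5_000, 5_000, 5_000]))  # kill
```

The first pilot is still $2,000 underwater overall but turned net positive in month three, so it earns more time; the second never got close.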

Step 2: Map AI to Customer Problems

The best way to start isn't by asking, "What can AI do?" Instead, focus on identifying where your business is losing money or customers. Top-performing companies are three times more likely to redesign workflows before choosing an AI tool. Evaluate workflows based on factors like time spent, error rates, and volume before selecting solutions.

Target processes that are high-volume, low-variance, and have clear success criteria, and that can tolerate minor inaccuracies. Focus on areas with the highest costs and manual effort. Rachio, a Denver-based smart irrigation company, took this approach in 2026. It identified Tier 1 technical support as a bottleneck: WiFi troubleshooting and device resets for more than one million users were consuming significant agent time. By deploying Crescendo.ai to handle these repetitive tasks while routing complex issues to human agents, Rachio achieved 95–99.8% response accuracy, cut support costs by 30%, and saved $60,000 annually by avoiding seasonal hiring.
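One way to apply these filters is to estimate what each workflow costs in manual labor every month, then rank only the workflows that pass the low-variance and clear-criteria tests. The Python sketch below is illustrative: the field names and example numbers are hypothetical, and it assumes a redo costs as much as the original run.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    runs_per_month: int           # volume
    minutes_per_run: float        # manual effort per run
    hourly_cost: float            # loaded labor cost, in dollars
    error_rate: float             # share of runs that need rework (0.0 to 1.0)
    low_variance: bool            # do most cases look alike?
    clear_success_criteria: bool  # can you tell a right answer from a wrong one?

    def monthly_cost(self) -> float:
        # Labor for every run, plus the same labor again for runs that need
        # rework (hypothetical assumption: a redo costs as much as the original).
        hours = self.runs_per_month * self.minutes_per_run / 60
        return hours * self.hourly_cost * (1 + self.error_rate)


def shortlist(workflows: list[Workflow]) -> list[Workflow]:
    """Keep workflows that pass both filters, most expensive first."""
    eligible = [w for w in workflows if w.low_variance and w.clear_success_criteria]
    return sorted(eligible, key=lambda w: w.monthly_cost(), reverse=True)


workflows = [
    Workflow("Password resets", 3_000, 6, 30.0, 0.05, True, True),
    Workflow("Contract negotiation", 40, 120, 80.0, 0.10, False, False),
    Workflow("Invoice matching", 1_200, 10, 35.0, 0.10, True, True),
]
for w in shortlist(workflows):
    print(f"{w.name}: ${w.monthly_cost():,.0f}/month")
```

Contract negotiation drops out however expensive it is, because its cases vary too much to automate reliably.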

Success depends on sequencing: audit workflows, clean up data, document processes, pinpoint automation opportunities, implement solutions, and measure outcomes. Skipping any of these steps risks automating inefficiencies. Internal hackathons can also help surface automation opportunities. When the target is well chosen, the payoff can be large: Connecteam introduced an AI-powered SDR named "Julian" that makes over 120,000 outbound calls a month, saving $450,000 annually in salaries, reducing no-shows by 73%, and adding $30,000 in monthly revenue per SDR.

Step 3: Define Measurable Use Cases

Once you've identified customer problems, the next step is to craft specific use cases with measurable outcomes. A use case should outline the target user, the problem being addressed, the desired result, and how AI will achieve it. Attach success metrics that matter.

Establish high confidence thresholds (e.g., 90%+) for automated actions, and route low-confidence cases to human review. Companies that include human oversight see 23% higher productivity gains than those relying solely on full automation. A simple rule: if you'd fire a human employee for making the same mistake as the AI, don't fully automate that task.
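Here is a minimal Python sketch of that routing rule. The 0.90 threshold comes from the text. The `high_stakes` flag is a hypothetical way to encode the "would you fire a human for this?" test, and it sends those tasks to review no matter how confident the model is.

```python
CONFIDENCE_THRESHOLD = 0.90  # the "90%+" bar from the text


def route(prediction: str, confidence: float, high_stakes: bool = False,
          threshold: float = CONFIDENCE_THRESHOLD) -> tuple[str, str]:
    """Return ("auto", prediction) or ("human_review", prediction)."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    # High-stakes tasks (a mistake you'd fire a person for) always get a human.
    if high_stakes or confidence < threshold:
        return "human_review", prediction
    return "auto", prediction


print(route("refund_approved", 0.97))                    # ('auto', 'refund_approved')
print(route("refund_approved", 0.72))                    # ('human_review', 'refund_approved')
print(route("contract_signed", 0.99, high_stakes=True))  # ('human_review', 'contract_signed')
```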

Take Grammarly as an example. In 2026, they adopted Salesforce Einstein for behavioral lead scoring to streamline their inbound sales funnel. By analyzing product usage and firmographic data, the AI identified "ready-to-buy" leads, resulting in an 80% increase in conversion rates.

Track metrics like "Straight-Through Processing" (STP) - the percentage of cases resolved without human involvement. For document automation, aim for 75–90% STP within six months. Evaluate success by tracking hours saved at full cost or revenue generated per AI-assisted workflow, rather than relying on adoption rates or satisfaction scores alone.
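Both numbers are simple to compute from a case log. In this Python sketch, `touched_by_human` is a hypothetical field your ticketing or document system would need to record, and the dollar figure values each saved hour at the fully loaded hourly cost.

```python
def stp_rate(cases: list[dict]) -> float:
    """Share of cases resolved with no human involvement ("touched_by_human" is hypothetical)."""
    if not cases:
        return 0.0
    straight_through = sum(1 for c in cases if not c["touched_by_human"])
    return straight_through / len(cases)


def hours_saved_value(automated_cases: int, manual_minutes_per_case: float,
                      loaded_hourly_cost: float) -> float:
    """Dollar value of the manual time the automated cases no longer need."""
    return automated_cases * manual_minutes_per_case / 60 * loaded_hourly_cost


cases = [{"touched_by_human": False}] * 8 + [{"touched_by_human": True}] * 2
print(f"STP: {stp_rate(cases):.0%}")  # STP: 80%
print(f"Value of hours saved: ${hours_saved_value(800, 12, 45.0):,.0f}")  # $7,200
```

An 80% STP rate would sit inside the 75–90% range suggested for document automation.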

Lastly, establish a feedback loop. Sales or support teams need an easy way to flag incorrect AI outputs. This feedback is critical for retraining models and preventing performance drift.
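A feedback loop can start as little more than a counter. This Python sketch logs each AI output and each staff flag, tracks the flag rate, and tallies reasons so retraining can focus on the most common failure. The 5% alert rate is a hypothetical number, not a benchmark.

```python
from collections import Counter


class FeedbackLog:
    """Tracks AI outputs and the "this is wrong" flags staff raise against them."""

    def __init__(self, alert_rate: float = 0.05):  # hypothetical review trigger
        self.alert_rate = alert_rate
        self.outputs = 0
        self.reasons = Counter()

    def record_output(self) -> None:
        self.outputs += 1

    def flag(self, reason: str) -> None:
        self.reasons[reason] += 1

    def flag_rate(self) -> float:
        return sum(self.reasons.values()) / self.outputs if self.outputs else 0.0

    def needs_review(self) -> bool:
        # A rising flag rate is an early sign of drift worth a retraining review.
        return self.flag_rate() > self.alert_rate


log = FeedbackLog()
for _ in range(100):
    log.record_output()
for reason in ["wrong price"] * 4 + ["wrong contact"] * 2:
    log.flag(reason)
print(log.flag_rate(), log.needs_review(), log.reasons.most_common(1))
```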

| Business Objective | Traditional Metric | AI-Driven Metric | Financial Impact |
|---|---|---|---|
| Increase sales | Conversion rate | AI-influenced conversion rate | 15–30% improvement |
| Reduce costs | Operating expenses | Process automation savings | 20–40% reduction |
| Improve satisfaction | NPS score | AI-resolved inquiry rate | 10-point NPS increase |

(Source: AI Metrics Framework, 2026)

The companies that succeed with AI are the ones that measure success in financial terms, tackle real customer issues, and track results obsessively. Everything else is just noise.

What Separates AI Winners From AI Talkers

What makes some companies thrive with AI while others just talk about it? It boils down to three key factors: defining clear problems, having strong data infrastructure, and deploying solutions quickly and iteratively. These elements turn lofty AI ambitions into actionable strategies. Only 5% of companies create tangible value from AI, and they get there by acting decisively.

Clear Problem Statements Drive Results

Successful companies start with a focused question like, "Which workflow costs us the most, has the most manual steps, and has clear success criteria?" This kind of clarity turns a vague idea into a specific AI project. On the other hand, asking something broad like, "How can we use AI to transform our business?" often leads to poorly defined initiatives that fail to deliver.

Take Unity Technologies, for example. They identified a clear problem: sales reps were relying on intuition instead of data-driven buyer engagement signals for forecasting. By focusing on precise metrics, they achieved a 29.9% improvement in win rates and a 209% increase in average deal size. Without clear goals, results are just as unclear. Defining problems sharply not only avoids wasted effort but also accelerates progress, turning AI’s potential into real business outcomes.

Data Infrastructure Determines AI Success

A solid data foundation is critical for AI to work effectively. Before diving into AI tools, companies need to audit their data. Are customer records complete? Are systems integrated? Getting these basics right first makes a huge difference, and high performers are three times more likely to redesign workflows before picking AI tools.

Consider Missouri Star Quilt Company. By leveraging clean product data, integrated order histories, and well-documented support processes, they automated 76% of repetitive customer chats. Without a strong data setup, many organizations hit roadblocks, struggling to connect fragmented systems. Companies that get their data in order first can then move quickly to test and refine their AI solutions.

Deploy Rapidly, Improve Later

Here’s a harsh truth: 88% of AI proofs-of-concept never make it to production because they’re tested in overly ideal conditions instead of messy, real-world scenarios. The best companies test their pilots with the most challenging 20% of cases, exposing potential issues early rather than focusing on perfect "happy path" demos.

A disciplined approach, like setting a 90-day kill switch for pilots that don’t show a clear path to ROI, helps ensure resources aren’t wasted. For instance, when Zapier pushed for AI adoption, CEO Wade Foster declared a "Code Red" and organized hackathons to encourage rapid experimentation. The result? A 97% internal adoption rate.

Another smart move: buying specialized AI tools instead of building them in-house. Vendor solutions succeed 67% of the time, compared to only 22–33% for internal builds. Swan AI, for example, reached nearly $1M in ARR with just three founders by choosing ready-made AI tools over a lengthy, in-house development process.

Meanwhile, companies that hesitate risk falling behind. While one team perfects their pilot, competitors are already deploying, learning, and improving. Decathlon, for example, rolled out AI agents across 2,000+ stores in 56 countries, boosting support-driven revenue by 20%. They didn’t wait for perfection - they acted, measured results, and refined their approach.

"The competitive question is no longer whether to adopt AI, but how to deploy it so the check clears faster. Nothing happens until the check clears." – GetErDone.AI Research Synthesis

At the end of the day, the winners measure success in dollars saved and revenue generated. Meanwhile, those stuck in endless planning focus on adoption rates and user satisfaction. One group is building a business; the other is just building dashboards.

Conclusion

The difference between companies excelling with AI and those merely discussing it boils down to four key factors: precise problem identification, alignment with business goals, reliable data systems, and quick execution. Successful companies don’t waste time chasing trends or creating flashy prototypes. Instead, they focus on pinpointing workflows that drain time and money, tie results to measurable financial metrics, ensure their data is in order before selecting tools, and swiftly test solutions in real-world scenarios.

The numbers tell a stark story: only 5% of enterprise AI projects lead to rapid revenue growth, a sign that planning without execution delivers little value. Meanwhile, companies leading in AI adoption experience 1.5x faster revenue growth and 1.6x higher shareholder returns than those lagging behind. And that gap? It’s not just persistent - it’s growing for businesses that delay moving from strategy to execution.

To bridge this divide, here’s a clear plan: Choose one high-impact, manual workflow with clear success metrics. Connect it to a financial outcome, like labor costs saved or revenue generated per AI-aided task. Instead of building from scratch, invest in a proven solution. Then, within 90 days, launch a focused pilot that tackles your most challenging cases and measures success in dollar terms - not just adoption rates.

As highlighted in the PwC Global CEO Survey:

"2026 is shaping up as a decisive year for AI... That gap is starting to show up in confidence and competitiveness - and it will widen quickly for those that don't act." – PwC Global CEO Survey

The message is clear: Act now. Delaying could mean falling behind competitors who are already refining their AI strategies. The pressing question is: Will you take action before the gap becomes too wide to close?

FAQs

What’s the first AI use case I should tackle in my business?

Start with automating a task that your team finds repetitive and time-consuming - something that not only frustrates them but also has clear, measurable outcomes. Think about processes like scheduling, drafting emails, or streamlining lead follow-ups and customer support workflows. Prioritize tasks that eat up a lot of time every week but are relatively simple to set up. This approach delivers fast, tangible results and helps create a strong foundation for expanding AI use across your business.

How do I calculate ROI for an AI pilot in plain dollars?

To figure out the ROI for an AI pilot, focus on cost savings and revenue changes directly linked to the project. Start by calculating the monthly cost of the manual process: multiply the weekly hours spent on it by the hourly rate, then by 4.33 (the average number of weeks in a month). Compare this with the cost of automation, such as software fees.

Next, add any revenue gains the AI drives (for example, from shorter sales cycles) and subtract its ongoing costs. Finally, calculate the net gain over a specific timeframe, such as 6 to 12 months.
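Here is that arithmetic as a small Python function. It assumes the hours are weekly hours spent on the manual task and that the AI absorbs all of them, and it reports ROI as net gain divided by total AI spend. All example figures are hypothetical.

```python
WEEKS_PER_MONTH = 4.33  # average weeks per month, per the text


def pilot_roi(weekly_hours: float, hourly_rate: float, monthly_ai_cost: float,
              monthly_added_revenue: float = 0.0, months: int = 12) -> dict:
    """Net gain and ROI of an AI pilot over a timeframe, in dollars."""
    if monthly_ai_cost <= 0:
        raise ValueError("monthly_ai_cost must be positive")
    manual_monthly_cost = weekly_hours * hourly_rate * WEEKS_PER_MONTH
    # Labor no longer spent + new revenue - what the AI costs to run.
    monthly_net = manual_monthly_cost + monthly_added_revenue - monthly_ai_cost
    net_gain = monthly_net * months
    roi_pct = net_gain / (monthly_ai_cost * months) * 100
    return {
        "manual_monthly_cost": manual_monthly_cost,
        "net_gain": net_gain,
        "roi_pct": roi_pct,
    }


# 20 hours a week at $40/hour, a $500/month tool, $1,000/month in added revenue
result = pilot_roi(20, 40, 500, monthly_added_revenue=1_000, months=12)
print(f"Manual cost: ${result['manual_monthly_cost']:,.0f}/month")
print(f"Net gain over 12 months: ${result['net_gain']:,.0f}")
print(f"ROI: {result['roi_pct']:.0f}%")
```

If the AI only absorbs part of the manual work, scale `weekly_hours` down to the hours it actually saves.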

What data needs to be cleaned for AI to work effectively?

AI thrives on clean, accurate, and well-organized data to produce dependable results. This means taking steps like eliminating duplicates, fixing errors, standardizing formats, and ensuring the data is complete. Alongside this, having clear and well-documented workflows is just as important. AI works best when it’s enhancing processes that are already well-structured.
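For customer records, those steps might look like the Python sketch below: standardize formats, drop duplicates, and set incomplete records aside for someone to fix. Using email as the identity key and storing phone numbers as digits only are both hypothetical choices for illustration.

```python
import re


def clean(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Return (clean records, incomplete records that need a manual fix)."""
    cleaned, incomplete = [], []
    seen_emails = set()
    for r in records:
        # Standardize formats: trim and lowercase email, digits-only phone,
        # collapse repeated spaces in names.
        email = (r.get("email") or "").strip().lower()
        phone = re.sub(r"\D", "", r.get("phone") or "")
        name = " ".join((r.get("name") or "").split())
        record = {**r, "email": email, "phone": phone, "name": name}

        if not email:
            incomplete.append(record)  # can't dedupe or contact without an email
        elif email not in seen_emails:
            seen_emails.add(email)
            cleaned.append(record)
        # Otherwise it duplicates a record already kept, so it is dropped.
    return cleaned, incomplete


raw = [
    {"name": " Ada  Lovelace ", "email": "ADA@Example.com ", "phone": "(555) 010-2000"},
    {"name": "Ada Lovelace", "email": "ada@example.com", "phone": "555-010-2000"},
    {"name": "Grace Hopper", "email": "", "phone": "555 010 3000"},
]
cleaned, incomplete = clean(raw)
print(cleaned)
print(incomplete)
```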

If your data quality is poor or your processes are unclear, the results can be inconsistent or misleading. So, before using AI, make sure your data is polished and your workflows are clearly defined to get the most out of your efforts.


How do I know if my business is ready to implement AI or still needs groundwork first?

If more than 30% of your business data lives in spreadsheets, duplicated records, or disconnected systems, you're not ready to layer AI on top yet—you'll just automate the mess. Spend 4–8 weeks auditing your data quality and documenting your core workflows before touching any AI tool. Clean inputs are what separate production-ready systems from expensive demos.

Should I build AI tools in-house or buy a specialized solution?

Buy before you build. Vendor-built AI solutions succeed roughly two to three times as often as internal builds (67% versus 22–33%) because they're already tested against real-world edge cases. Build custom only when no existing tool solves your specific, well-defined problem - and only after you've validated the use case with a focused pilot.

What does a 90-day AI pilot actually look like in practice?

Pick one high-volume, manual workflow with a clear financial cost attached to it, then deploy a solution against your hardest 20% of cases—not the easy ones. By day 90, you should be measuring dollars saved or revenue generated per workflow, not user satisfaction scores. If you can't show a clear path to ROI by then, kill it and move to the next problem.

How much of my team's time should go toward AI implementation versus their normal work?

Assign one dedicated product owner to each AI initiative—committees kill momentum. Data readiness alone typically consumes 50% of the project timeline before any model is touched, so plan for that load upfront rather than treating it as a side task.

When should a human stay in the loop instead of letting AI handle a task fully?

A simple rule: if you'd fire an employee for making the same mistake the AI might make, don't fully automate that task. Set confidence thresholds of 90% or higher for automated actions and route everything below that threshold to a human reviewer—companies that include human oversight consistently see higher productivity gains than those chasing full automation.