Stop Buying AI Tools. Start Building AI Systems.

Most businesses waste money on generic AI tools that don’t deliver. Why? These solutions are built for broad use, not your specific needs. The result? Manual workarounds, inefficiencies, and missed potential.

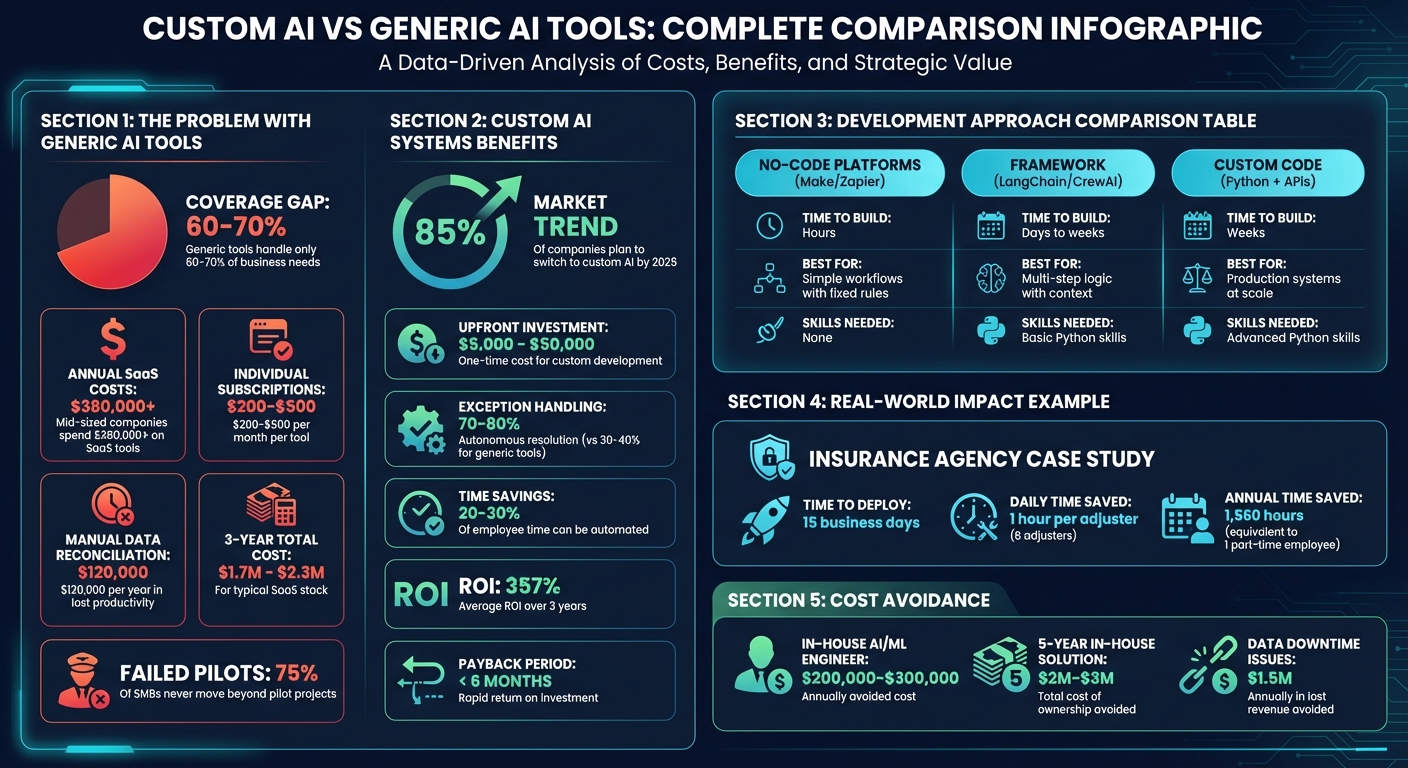

Here’s the solution: Build custom AI systems tailored to your workflows and data. While the upfront cost ranges from $5,000 to $50,000, they cut recurring subscription fees, reduce inefficiencies, and leverage your proprietary data for better results.

Key takeaways:

- 85% of companies plan to switch to custom AI by 2026.

- Generic tools often cover only 60–70% of business needs.

- Custom AI systems can save hours of manual work, cutting costs and boosting productivity.

Instead of adapting your business to fit generic tools, create systems that fit your business. Start by identifying repetitive tasks, prioritizing high-impact processes, and choosing the right development approach. Whether through no-code platforms or custom coding, the goal is the same: own your advantage, not just rent tools.

Custom AI vs Generic Tools: Cost Comparison and Business Impact

Why Generic AI Tools Don't Work for Most Businesses

Pre-Built Tools Can't Adapt to Your Workflow

Generic AI tools are built with the "average company" in mind. This makes them ill-suited for businesses with specialized needs. For instance, a law firm may require jurisdiction-specific compliance checks, while a manufacturer might have unique quality control processes. These off-the-shelf solutions typically handle only 60–70% of what businesses need, leaving a 30–40% gap that demands time-consuming adjustments.

Integration is another major hurdle. Many companies rely on a patchwork of API connections to link their tools. When vendors update their APIs or models, it can disrupt essential workflows like order fulfillment or lead management.

"Nothing works automagically... It's deceptively easy to look at some AI agent demos and think 'oh, I can replace my team's huge mess of spaghetti code with a few clever AI prompts!'" - daxfohl, Hacker News

Generic tools also fail to understand your business's internal language, specific deal histories, or the quirks of legacy systems. Your proprietary data is one of your biggest competitive advantages, but off-the-shelf solutions can’t fully utilize it. This often results in generic AI outputs that require constant human intervention, defeating the purpose of automation. These limitations not only reduce operational effectiveness but also add hidden costs to your bottom line.

The Real Cost of Generic Tools

Subscription fees are just one part of the expense. Mid-sized companies typically spend over $380,000 annually on SaaS tools, with individual software subscriptions ranging from $200 to $500 per month for full access. On top of that, manual data reconciliation costs mid-sized teams an additional $120,000 per year in lost productivity.

Customization adds another layer of expense. Consultant fees and inefficiencies from switching between tools can push the three-year total cost of ownership for a typical SaaS stack to somewhere between $1.7 million and $2.3 million. These challenges are why 75% of small and mid-sized businesses experimenting with AI never move beyond pilot projects.

Traditional per-seat pricing models create another problem: an "efficiency penalty." Even as AI agents take on work that once required several people, businesses are still charged per user, so the savings from automation never show up on the invoice. This is one reason 85% of businesses are seeking customized AI solutions.



How to Find AI Opportunities in Your Business

Shifting from off-the-shelf tools to tailored AI solutions starts with identifying automation opportunities hidden within your daily operations.

List Your Repetitive Tasks

Spend time observing a typical workday to see what your team actually does, rather than relying on formal workflows or process guides. For instance, you might notice employees exporting data into CSV files, reformatting it in Excel, and uploading it into another system - a clear sign of manual effort bridging gaps between tools. These are perfect candidates for automation.

Focus on recurring tasks, especially those performed weekly, like sorting incoming emails, consolidating reports, or reviewing contracts. These are tasks where results can be easily checked, making them safer for AI to handle. A good rule of thumb: if you can verify that the AI’s output is correct, it's a strong contender for automation.

Research suggests employees spend 20–30% of their time on tasks that could be automated. Look for these opportunities across departments: sales teams qualifying leads, finance teams reconciling invoices, or support teams managing Tier-1 customer inquiries. Pay special attention to workarounds or unofficial processes employees have created, as these often highlight inefficiencies better than outdated process maps.

Rank Opportunities by Impact

Not every repetitive task is worth automating. To prioritize, group tasks into three categories: Commodity (basic functions like email), Operational (important but not unique processes), and Strategic (tasks directly tied to revenue or competitive advantage). Focus on automating tasks in the strategic category first.

Quantify the "friction tax" of each task by calculating its recurring time cost. Multiply the hours spent on the task by the employee’s hourly rate, and consider the impact of errors or delays. For example, if a finance team spends 15 hours a month manually reconciling data at $50 per hour, that’s $750 a month, or $9,000 annually, in labor costs alone. Add to that the potential delays in financial reporting or decision-making. Compare this expense with the complexity of automating the task and the quality of available data. Start with high-friction tasks and aim to implement a solution within 4–8 weeks to test its return on investment (ROI).
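
The labor-cost arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a full prioritization model: the `Task` class and the second task are hypothetical, and only the first task's figures (15 hours a month at $50 per hour) come from the example above.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    hours_per_month: float
    hourly_rate: float  # dollars per hour

    def annual_labor_cost(self) -> float:
        # Monthly hours x hourly rate x 12 months
        return self.hours_per_month * self.hourly_rate * 12


tasks = [
    # Figures from the finance example in the text
    Task("Manual data reconciliation", hours_per_month=15, hourly_rate=50),
    # Hypothetical second task, included only to show the ranking
    Task("Weekly report consolidation", hours_per_month=8, hourly_rate=40),
]

# Rank by annual labor cost, highest friction first
ranked = sorted(tasks, key=lambda t: t.annual_labor_cost(), reverse=True)
for task in ranked:
    print(f"{task.name}: ${task.annual_labor_cost():,.0f} per year")
```

Labor cost is only one input. The cost of errors, delays, and the difficulty of automating each task still has to be weighed by hand.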

"Rent commodity functions. Own your competitive advantage. Keep SaaS where differentiation is low; build custom AI where process quality and speed define outcomes." - R-Sun.ai

How to Build a Custom AI System

Once you've identified the tasks that could benefit most from automation, the next step is creating a system tailored to your business needs.

Pick the Right Framework for Your Needs

Thanks to today's tools, building a custom AI system is more approachable than ever. Options range from no-code platforms for basic workflows to custom coding for highly complex systems.

The key is matching your project with the right development approach. For simpler tasks - like triggering Slack notifications when a form is submitted - tools like Make or Zapier can have you up and running in hours. For more nuanced workflows, such as routing leads based on their conversation history, you'll need a framework like LangChain or CrewAI. These allow you to build multi-step processes without starting from scratch. However, if your system needs to handle heavy traffic or integrate with proprietary databases, custom Python code with direct API calls to models like Claude or GPT-4 is your best bet. This approach ensures reliability and scalability for production-grade systems.

Your choice should depend on how much customization you need, how quickly you need results, and the skillset available within your team. A small regional business won’t require the same setup as a large corporation.

| Approach | Time to Build | Best For | Skills Needed |
|---|---|---|---|
| No-code (Make/Zapier) | Hours | Simple workflows with fixed rules | None |
| Framework (LangChain/CrewAI) | Days to weeks | Multi-step logic with context | Basic Python skills |
| Custom Code (Python + APIs) | Weeks | Production systems at scale | Advanced Python skills |

Choosing the right framework is just the starting point. Let’s explore how this plays out in practice.

Example: Building a Lead Qualification Agent

In March 2026, Parker Gawne from Syntora developed a lead qualification agent for a regional insurance agency employing six adjusters. The agency had previously tried using Salesforce Flow but abandoned it after three months due to its inability to handle complex API integrations, such as accessing government data or cross-referencing policy eligibility rules.

To solve this, Parker built a system using Python, LangGraph for workflow orchestration, and the Claude 3 Sonnet API for decision-making. The agent streamlined lead qualification by performing three key tasks: enriching lead data through external APIs (like DMV records and property databases), scoring the leads based on predefined rules, and routing them to the appropriate adjuster in the CRM. The workflow was designed as a state machine, where each step - enrichment, scoring, and routing - was a node in the process. This setup allowed the system to handle asynchronous tasks, like waiting for a government database response, without timing out.
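
To make the state-machine idea concrete, here is a minimal sketch in plain Python. It does not use LangGraph and is not the code from the project described above. The node functions, field names, and thresholds are all hypothetical, and the sketch is synchronous, whereas the real system reportedly handled asynchronous waits.

```python
from typing import Callable

Lead = dict  # mutable state passed from node to node


def enrich(lead: Lead) -> str:
    # Placeholder for external lookups (hypothetical hard-coded value)
    lead["property_value"] = 250_000
    return "score"  # name of the next node


def score(lead: Lead) -> str:
    # Hypothetical rule: higher property value means a higher score
    lead["score"] = 80 if lead["property_value"] >= 200_000 else 40
    return "route"


def route(lead: Lead) -> str:
    # Hypothetical routing rule based on the score
    lead["assigned_to"] = "senior_adjuster" if lead["score"] >= 70 else "general_queue"
    return "done"


NODES: dict[str, Callable[[Lead], str]] = {
    "enrich": enrich,
    "score": score,
    "route": route,
}


def run(lead: Lead, start: str = "enrich", max_steps: int = 10) -> Lead:
    """Run nodes until one returns 'done'; stop after max_steps to avoid endless loops."""
    state = start
    for _ in range(max_steps):
        state = NODES[state](lead)
        if state == "done":
            return lead
    raise RuntimeError("workflow did not finish within max_steps")


result = run({"name": "Example Lead"})
print(result["assigned_to"])
```

Because each node only returns the name of the next node, steps can be added, reordered, or retried without touching the others.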

The project was deployed as a Docker container on AWS Lambda, with Supabase Postgres managing the system's state. In just 15 business days, the system was operational, saving each adjuster an hour daily. Over a year, this added up to approximately 1,560 hours - equivalent to the workload of a part-time employee.
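
The 1,560-hour figure is consistent with six adjusters each saving one hour per working day. The check below assumes 260 working days a year (52 weeks of 5 days), which the source does not state.

```python
ADJUSTERS = 6                  # from the example above
HOURS_SAVED_PER_DAY = 1        # per adjuster, from the example above
WORKING_DAYS_PER_YEAR = 260    # assumption: 52 weeks x 5 days

annual_hours_saved = ADJUSTERS * HOURS_SAVED_PER_DAY * WORKING_DAYS_PER_YEAR
print(annual_hours_saved)  # 1560
```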

What made this system particularly effective was its reliance on first-party data. It was trained on the agency's internal lead scoring criteria, past client conversations, and email templates. This approach gave the agency a competitive edge that generic, off-the-shelf tools simply couldn’t match.

"Real leverage comes when AI becomes part of your infrastructure. When large language models remember your frameworks, your positioning, and your client language." - Ken Yarmosh

Integrating and Scaling Your AI System

Connect AI to Your Current Tools

Your AI system is only as effective as its ability to integrate with the tools you already use. This is where many custom AI projects either succeed or become expensive missteps.

Start by identifying the connections your AI needs. For instance, if you've built a lead qualification agent, it should pull data from your CRM, enhance it via external APIs, and push the results back. A straightforward way to test these connections is by using automation platforms like n8n or Make. These platforms serve as middleware, helping you link your AI system to tools like Slack, HubSpot, or Google Sheets - without needing to write custom API code for each integration.
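
The pull, enrich, and push-back loop described above might look like the following sketch. Everything here is illustrative: `CRM` is a hypothetical interface, `InMemoryCRM` stands in for a real CRM client, and `enrich` and `qualify` are placeholders rather than calls to any actual service.

```python
from typing import Protocol


class CRM(Protocol):
    """Hypothetical interface; a real adapter would wrap your CRM's API."""

    def fetch_new_leads(self) -> list[dict]: ...
    def update_lead(self, lead_id: str, fields: dict) -> None: ...


class InMemoryCRM:
    """Stand-in for testing the flow without a real CRM."""

    def __init__(self, leads: list[dict]) -> None:
        self.leads = {lead["id"]: dict(lead) for lead in leads}

    def fetch_new_leads(self) -> list[dict]:
        # Treat leads without a score as unprocessed
        return [lead for lead in self.leads.values() if "score" not in lead]

    def update_lead(self, lead_id: str, fields: dict) -> None:
        self.leads[lead_id].update(fields)


def enrich(lead: dict) -> dict:
    # Placeholder for an external API call (hypothetical field and value)
    return {"company_size": 25}


def qualify(lead: dict, extra: dict) -> int:
    # Hypothetical scoring rule
    return 90 if extra["company_size"] >= 10 else 30


def sync(crm: CRM) -> int:
    """Pull unprocessed leads, enrich and score them, push results back."""
    processed = 0
    for lead in crm.fetch_new_leads():
        extra = enrich(lead)
        crm.update_lead(lead["id"], {**extra, "score": qualify(lead, extra)})
        processed += 1
    return processed


crm = InMemoryCRM([{"id": "a1", "email": "lead@example.com"}])
print(sync(crm))  # number of leads processed on this pass
```

Keeping the CRM behind an interface means the same `sync` function can be exercised against a fake during testing and a real adapter in production.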

While middleware solutions are great for initial testing, they might not scale well due to limitations like rate throttling or escalating costs. When these issues arise, consider switching to custom solutions on platforms like AWS or GCP. For example, the insurance agency mentioned earlier opted for AWS Lambda to ensure uptime and handle spikes in lead volume without manual intervention.

Another key technical step is creating an abstraction layer between your application logic and the AI provider. Instead of directly calling OpenAI or Claude, your system should route tasks through this layer, which selects the best model for each job. For instance, basic lookups might use GPT-4o-mini to save costs, while more complex tasks are routed to GPT-4. This approach also protects you from vendor lock-in. If another model, like Claude, starts performing better for your needs, you can switch without overhauling your system.
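
A minimal sketch of such an abstraction layer is shown below. It assumes each provider can be wrapped as a function from prompt to text. `ModelRouter` and the two fake providers are hypothetical names, and no real vendor SDK is called.

```python
from typing import Callable

# A provider is any callable that takes a prompt and returns text.
Provider = Callable[[str], str]


def fake_small_model(prompt: str) -> str:
    # Stand-in for a cheaper model; a real version would call a vendor API
    return f"[small] {prompt}"


def fake_large_model(prompt: str) -> str:
    # Stand-in for a more capable model
    return f"[large] {prompt}"


class ModelRouter:
    """Maps task types to providers so application code never names a vendor."""

    def __init__(self) -> None:
        self._routes: dict[str, Provider] = {}

    def register(self, task_type: str, provider: Provider) -> None:
        self._routes[task_type] = provider

    def complete(self, task_type: str, prompt: str) -> str:
        try:
            provider = self._routes[task_type]
        except KeyError:
            raise ValueError(f"no provider registered for {task_type!r}") from None
        return provider(prompt)


router = ModelRouter()
router.register("lookup", fake_small_model)    # cheap model for simple tasks
router.register("analysis", fake_large_model)  # stronger model for complex tasks

print(router.complete("lookup", "Find the policy number"))
```

Switching vendors then becomes a one-line change to a `register` call, while every caller of `router.complete` stays untouched.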

"The abstraction layer is what makes 'blend' a real strategy instead of a mess. It lets you use OpenAI for one task, Claude for another, and a fine-tuned open-source model for a third." - Last Rev Team

Once your AI is integrated, continuous monitoring is essential to ensure it delivers consistent results.

Test and Monitor Performance

AI systems have unique challenges compared to traditional software. They might produce outputs that seem correct but aren’t, waste resources in endless loops, or even behave unpredictably after a model update. Standard monitoring tools that track uptime and error codes won’t catch these issues.

This is why you need AI-specific observability tools. Platforms like LangSmith or Langfuse allow you to trace every step your AI takes. For example, if a lead is misrouted, you can replay the exact prompt, context, and tool calls that caused the error. This is crucial for debugging non-deterministic systems where the same input can yield different results.
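
To show what a trace captures, here is a simplified, hand-rolled recorder. It is not the LangSmith or Langfuse API; the `Trace` and `TraceStep` classes and all field names are hypothetical, and a real tool records far more detail.

```python
import json
import time
from dataclasses import asdict, dataclass, field


@dataclass
class TraceStep:
    name: str
    prompt: str
    output: str
    tool_calls: list = field(default_factory=list)
    timestamp: float = field(default_factory=time.time)


@dataclass
class Trace:
    run_id: str
    steps: list = field(default_factory=list)

    def record(self, name: str, prompt: str, output: str, tool_calls=None) -> None:
        # Store exactly what the model saw and returned at this step
        self.steps.append(TraceStep(name, prompt, output, tool_calls or []))

    def to_json(self) -> str:
        # Serialize the whole run so it can be stored and inspected later
        return json.dumps(asdict(self), indent=2)


trace = Trace(run_id="lead-123")  # hypothetical run identifier
trace.record(
    name="score",
    prompt="Score this lead: ...",
    output="72",
    tool_calls=[{"tool": "crm_lookup", "args": {"lead_id": "lead-123"}}],
)
print(trace.to_json())
```

With the prompt, output, and tool calls stored per step, a misrouted lead can be examined step by step instead of guessed at.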

Set up automated evaluations to run whenever you deploy changes. Use a fixed set of test prompts and compare the outputs to a baseline. If the system’s accuracy drops by more than 5%, pause the deployment. For the insurance agency, this process involved running 50 sample leads through the system weekly to ensure consistent scoring logic.
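
A deployment gate along these lines could be sketched as follows. The test cases and both scoring functions are hypothetical, and the 5% threshold is interpreted here as five percentage points of accuracy, which is an assumption about what the rule above means.

```python
from typing import Callable


def accuracy(predict: Callable[[int], str], cases: list[tuple[int, str]]) -> float:
    """Fraction of fixed test cases where the prediction matches the expected label."""
    correct = sum(1 for value, expected in cases if predict(value) == expected)
    return correct / len(cases)


def should_deploy(baseline: float, candidate: float, max_drop: float = 0.05) -> bool:
    """Allow deployment unless accuracy fell by more than max_drop."""
    return (baseline - candidate) <= max_drop


# Hypothetical fixed test set: (lead score input, expected label)
cases = [(90, "high"), (75, "high"), (40, "low"), (10, "low")]


def baseline_model(value: int) -> str:
    return "high" if value >= 50 else "low"


def candidate_model(value: int) -> str:
    # A changed threshold that mislabels the 75-point lead
    return "high" if value >= 80 else "low"


base_acc = accuracy(baseline_model, cases)
cand_acc = accuracy(candidate_model, cases)
print(base_acc, cand_acc, should_deploy(base_acc, cand_acc))
```

In a real pipeline, `predict` would call the deployed system and the gate would run in CI before each release.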

Focus on the metrics that matter most:

- Latency: How quickly the system responds.

- Token cost: The expense of each action.

- Autonomous resolution rate: The percentage of tasks the AI completes without human help.

Custom agents often achieve 70–80% autonomous resolution rates, compared to 30–40% for off-the-shelf tools. If these metrics decline, it’s a sign that something in your data or workflow needs attention.
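
The three metrics can be computed from simple per-task records, as in this sketch. The `TaskRecord` fields, the sample data, and the token price are all hypothetical. Actual pricing depends on the model and provider.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskRecord:
    latency_seconds: float
    tokens_used: int
    needed_human: bool


def summarize(records: list[TaskRecord], price_per_1k_tokens: float) -> dict:
    return {
        "avg_latency_seconds": mean(r.latency_seconds for r in records),
        # Average tokens per task converted to dollars
        "avg_token_cost": mean(r.tokens_used for r in records) / 1000 * price_per_1k_tokens,
        # Share of tasks completed without human help
        "autonomous_resolution_rate": sum(not r.needed_human for r in records) / len(records),
    }


# Hypothetical sample of four completed tasks
records = [
    TaskRecord(2.0, 1000, False),
    TaskRecord(4.0, 3000, False),
    TaskRecord(3.0, 2000, True),
    TaskRecord(3.0, 2000, False),
]

summary = summarize(records, price_per_1k_tokens=0.01)  # hypothetical price
print(summary)
```

Tracking these numbers over time, rather than as a one-off snapshot, is what reveals a decline after a model update or data change.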

Organizations that implement robust AI monitoring report an average 357% ROI over three years, with payback periods of less than six months. On the flip side, failing to monitor properly can result in $1.5 million annually in lost revenue due to data downtime and quality issues.

Working with ZipLyne for AI Implementation

What ZipLyne Does Differently

ZipLyne takes a tailored approach to AI, solving workflow challenges that off-the-shelf tools and expensive in-house solutions often fail to address. Many businesses find themselves stuck between hiring costly AI talent or using generic tools that don’t align with their processes. ZipLyne eliminates that dilemma.

Instead of shelling out $200,000–$300,000 annually for an AI/ML engineer or waiting 6–11 months for an internal team to deliver a solution, ZipLyne provides direct access to an expert who builds custom AI systems in just days. No endless meetings. No drawn-out planning phases.

Their focus? Automating repetitive tasks like lead qualification, data entry, and customer support. ZipLyne integrates directly with existing systems like CRMs and ERPs, avoiding the disruptions and inefficiencies caused by data silos or generic solutions. These systems are designed to work with your data and adapt to your unique workflows seamlessly.

With over 150 projects completed and more than $50 million in value created, ZipLyne’s track record speaks for itself. Clients have reported 40–70% reductions in manual work, proving that AI systems tailored to specific workflows deliver real, measurable results.

Results from Real Projects

Companies partnering with ZipLyne have transformed their operations by automating time-consuming tasks, allowing their teams to focus on activities that drive revenue instead of getting bogged down by repetitive processes.

For example, one client avoided spending $2 million to $3 million over five years - the typical total cost of ownership for a custom in-house AI solution. Another client replaced generic AI tools, which often come with recurring costs exceeding $200 per month, with a unified system that connects their tools, processes data in real time, and remains stable even through model updates.

These results come from ZipLyne’s focused execution model. By capping their workload at five active projects at a time, they ensure each client gets the attention they deserve. Project costs typically range from $100,000 to over $500,000, with maintenance retainers between $200 and $5,000 per month. This approach ensures high-quality outcomes while keeping long-term costs manageable.

Conclusion

Generic AI tools are designed with the "average" company in mind - but your business isn’t average. Your workflows - whether it’s qualifying leads, onboarding clients, or closing the books - require more than a cookie-cutter solution.

Off-the-shelf platforms often force you to change how you operate, creating inefficiencies over time. Custom AI systems flip this script. They adapt to your processes, integrate with tools like your CRM and ERP, and leverage your proprietary data to handle the edge cases that generic tools often miss. This tailored approach ensures your AI grows alongside your business. In fact, custom agents often resolve 70–80% of tasks without human help, compared to just 30–40% for off-the-shelf tools.

Beyond operational benefits, custom AI also makes financial sense. SaaS fees tend to balloon as you scale, while custom AI systems have fixed costs that become more economical over time. That’s why 85% of companies are expected to transition to custom AI by 2026 - not because it’s trendy, but because it creates a competitive edge.

The first step? Audit your repetitive tasks and rank them by their impact. Then, pick one high-friction process to automate. You don’t need to overhaul your entire system overnight. Automating even one workflow reduces subscription costs, eliminates workarounds, and gives you a leg up on the competition.

"Rent the commodity. Own the advantage." - R[AI]SING SUN

FAQs

How do I choose the first workflow to automate with AI?

When tackling automation, begin with a task that’s repetitive and eats up a lot of time - one with clear, measurable results. Focus on areas where manual processes cause delays or errors, such as data entry or customer support. Start small with something straightforward, like automating email responses. Make sure the process is trackable so you can measure improvements in efficiency or accuracy. This way, you can show quick wins, proving its value and paving the way for future automation efforts.

What data do I need before building a custom AI system?

To create a custom AI system that fits your needs, start by collecting data that mirrors your specific operations. This could include internal workflows, industry-specific terminology, legacy system data, and customer information. The goal is to align the AI with your processes so it can deliver meaningful results.

It's also important to evaluate your existing data infrastructure. Look at the quality of your data and how easily it can be accessed. This will help you plan for seamless integration and effective training. Using tailored data not only boosts accuracy but also enhances the system's overall performance.

How do I prevent vendor lock-in when using AI models?

To steer clear of vendor lock-in with AI models, it's smart to use open-source frameworks and retain ownership of your model weights. This approach gives you control over your data and workflows, cutting down on reliance on proprietary APIs. By creating a unified AI gateway that incorporates open-source models, you can adapt more easily as the AI ecosystem changes. Additionally, routinely evaluating your tools and components helps you decide when to build solutions in-house or purchase them, ensuring you maintain control over your core intellectual property while keeping external dependencies to a minimum.

How long does it actually take to build a custom AI system?

Simple workflow automations using no-code tools can go live in hours. Framework-based systems with multi-step logic take days to weeks. Production-grade systems with custom code, external API integrations, and CRM connectivity typically ship in 15–30 business days, not the 6–11 months an internal hire would need.

What's the difference between an AI tool and an AI system?

An AI tool is something you subscribe to and adapt your workflow around. An AI system is built around your workflow, trained on your data, and integrated directly into your existing stack, so it handles your edge cases instead of forcing workarounds.

When does it make sense to build custom AI versus just using a SaaS tool?

Build custom when the process is strategic: tied to revenue, customer experience, or competitive advantage. Use SaaS for commodity functions like basic email or calendar scheduling where differentiation is low and your data isn't involved.

How do I know if my business has enough data to train a custom AI system?

You don't need a massive dataset; you need the right data. Internal lead scoring criteria, past client conversations, email templates, and legacy system records are often enough to give a custom system a meaningful edge over generic tools.

What happens when an AI model gets updated and breaks my workflow?

This is exactly why production systems should route tasks through an abstraction layer instead of calling AI providers directly. That layer lets you swap models (from GPT-4 to Claude or an open-source alternative) without rebuilding your entire workflow.